New technology is any recently developed or newly applied tool, system, or method that changes how people work, communicate, build products, or deliver services. The key point is not “newness” but whether it meaningfully improves outcomes like speed, cost, safety, accuracy, or customer experience.

If you feel like every week brings another “must-adopt” platform, model, device, or framework, you’re not imagining it. Emerging tech trends move fast, and the marketing around them often moves faster than real-world adoption.

This guide strips away the buzzwords. You’ll get a practical definition, examples of cutting-edge innovations that actually show up in US workplaces, a quick checklist to tell “interesting” from “useful,” and a realistic path to adoption that does not blow up your budget or your team’s patience.

What “new technology” really means (and what it doesn’t)

In practice, “new” rarely means “invented yesterday.” Many modern tech advancements are older ideas becoming usable because compute got cheaper, sensors got better, or regulation and standards finally caught up.

Two simple ways to define new technology without getting lost in hype:

- New-to-the-world: breakthrough inventions that create capabilities that did not exist in any practical form, for example certain medical devices, novel materials, or new kinds of AI models.

- New-to-you: next-gen solutions that are proven elsewhere but new in your organization, like moving from on-prem servers to cloud, adopting zero trust security, or modernizing a data stack.

What it is not, at least not automatically, is “anything with AI in the name” or “the newest app your competitor posted about.” Disruptive innovation can be real, but it can also be a label slapped on incremental upgrades.

According to the National Institute of Standards and Technology (NIST), effective technology programs rely on clear risk management and measurable controls, which is a polite way of saying you need definitions, guardrails, and accountability before you scale anything.

Why emerging tech trends feel overwhelming in the US right now

Technology adoption in the US is shaped by a mix of fast-moving markets and uneven constraints. Some industries can pilot quickly; others move slower because safety, compliance, procurement, or legacy systems set the pace.

These are the usual reasons people feel whiplash:

- Short product cycles: advanced digital tools ship updates constantly, so “current” becomes “behind” in months.

- Vendor noise: many “innovative tech solutions” differ mainly in packaging, pricing, or integrations.

- Infrastructure gaps: without clean data, identity management, or cloud governance, even good tools fail.

- Workforce reality: a tool that needs heavy training can stall, even if it looks impressive in demos.

One more thing people underestimate: internal readiness often matters more than how “cutting-edge” the tech looks. If your process is messy, new tools can simply produce the mess faster.

Common categories of cutting-edge innovations (with grounded examples)

It helps to bucket new technology by what it changes. Here are categories that show up across many US teams, with examples that are common enough to be worth understanding.

Advanced digital tools for automation and decision-making

- AI copilots and assistants for drafting, summarizing, coding, customer support triage, and internal knowledge search.

- Workflow automation that connects apps, routes approvals, and reduces manual copy/paste work.

Next-gen solutions for connectivity and computing

- Edge computing for faster local processing in retail, manufacturing, logistics, and healthcare environments.

- Private 5G in facilities that need reliable connectivity beyond Wi‑Fi.

Breakthrough inventions in physical systems

- Robotics for repetitive tasks in warehouses, labs, and light manufacturing.

- New sensor tech for monitoring equipment health, energy use, or environmental conditions.

Future-ready technology for security and trust

- Zero trust architectures built on identity-first access and continuous verification.

- Modern encryption and key management for cloud systems and sensitive data.

According to the Cybersecurity and Infrastructure Security Agency (CISA), reducing risk depends heavily on basics like asset visibility, secure configuration, and vulnerability management, which is why “cool” tools rarely compensate for missing fundamentals.

A quick self-check: is this new technology worth your time?

If you only take one thing from this article, make it this: evaluate new technology like an operator, not a spectator. A simple checklist beats a long debate.

- Clear job-to-be-done: can you describe the problem in one sentence without naming the tool?

- Measurable outcome: cost per ticket, cycle time, error rate, conversion rate, uptime, churn, claims processing time.

- Data readiness: do you have permissioned data, consistent IDs, and a source of truth?

- Integration reality: does it connect to your core systems without fragile workarounds?

- Operational ownership: who maintains it after the pilot, and what is the weekly effort?

- Risk and compliance fit: privacy, security controls, procurement, vendor terms, auditability.

- User adoption likelihood: will real users pick it up, or will it require constant pushing?

If you struggle to answer two or three of these, it does not mean “don’t adopt.” It usually means “slow down and define the scope,” because vague pilots tend to become expensive experiments.
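The checklist above can be turned into a small readiness check. The following is a minimal sketch, not a formal scoring method: the item names are shorthand for the seven bullets, and the cutoff of two unanswered items is an assumption based on the “two or three” rule of thumb in this section.

```python
# Shorthand names for the seven checklist bullets (illustrative, not a standard).
CHECKLIST = [
    "job_to_be_done",
    "measurable_outcome",
    "data_readiness",
    "integration_reality",
    "operational_ownership",
    "risk_and_compliance_fit",
    "user_adoption_likelihood",
]


def assess(answers: dict[str, bool]) -> tuple[str, list[str]]:
    """Return a recommendation plus the checklist items still unanswered.

    `answers` maps an item name to True when the team can answer it
    clearly today. Items missing from `answers` count as unanswered.
    """
    gaps = [item for item in CHECKLIST if not answers.get(item, False)]
    # Assumed threshold: two or more gaps means narrow the scope first.
    if len(gaps) >= 2:
        return "slow down and define the scope", gaps
    return "ready for a narrow pilot", gaps
```

Calling `assess` with `data_readiness` and `operational_ownership` marked `False` returns the “slow down” recommendation along with those two items, which gives the team a concrete list to work through before the pilot starts.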

Adoption playbook: from curiosity to a working rollout

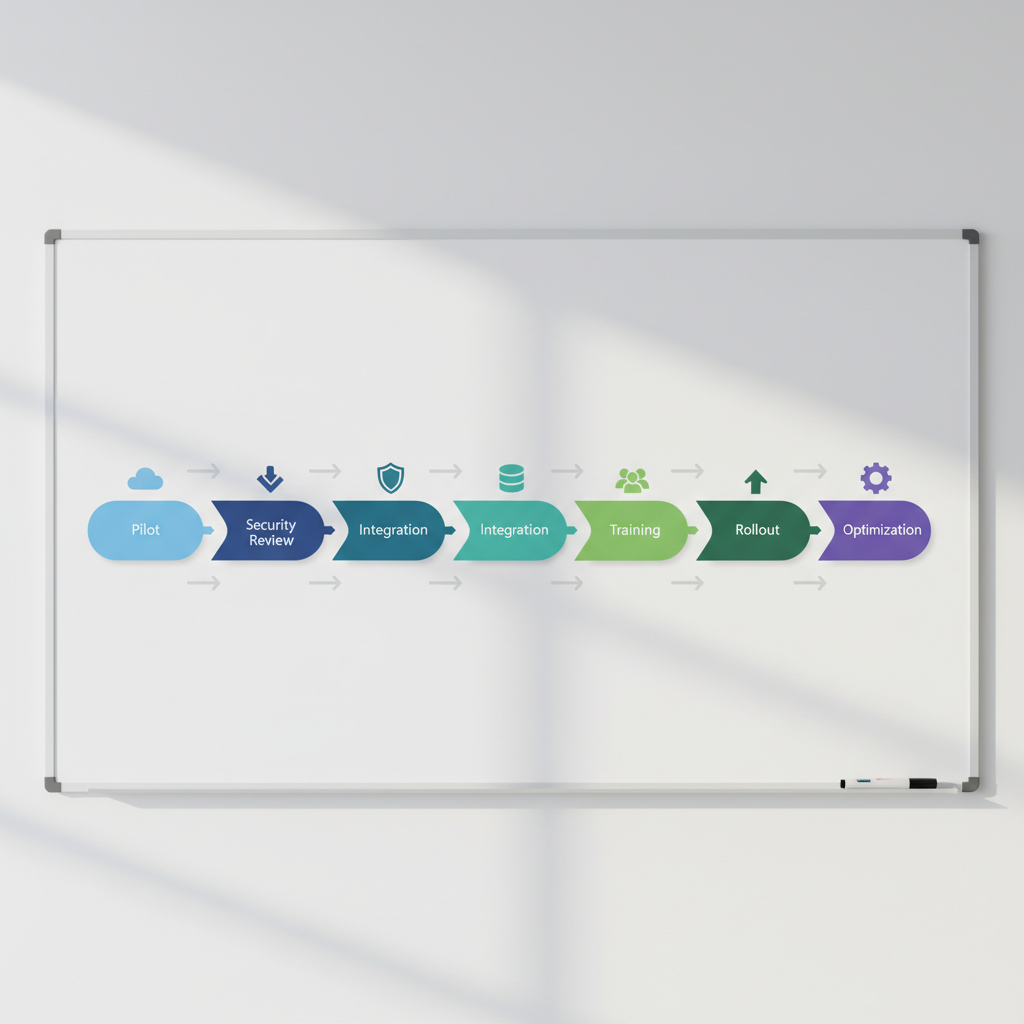

Technology adoption in the US often fails in the middle, not at the start. Teams get a proof-of-concept running and then hit security reviews, messy data, training gaps, and unclear ownership. A staged approach keeps momentum while limiting downside.

1) Start with a narrow pilot that reflects real work

- Pick one workflow, one team, and one “before vs after” metric.

- Define what “success” looks like and what would make you stop.

- Use production-like constraints; sample data and toy tasks can be misleading.
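One way to make the “before vs after” metric and the stop condition explicit is to write them down as numbers before the pilot begins. The sketch below assumes a metric where lower is better (for example, cycle time or cost per ticket); the function name and both thresholds are hypothetical and should be set by the team.

```python
def evaluate_pilot(baseline: float, pilot: float,
                   success_reduction: float, stop_increase: float) -> str:
    """Compare one lower-is-better metric before and after a pilot.

    success_reduction: fractional drop that counts as success (0.20 = 20%).
    stop_increase: fractional rise that triggers a stop (0.05 = 5%).
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    # Relative change: negative means the metric improved.
    change = (pilot - baseline) / baseline
    if change <= -success_reduction:
        return "success"
    if change >= stop_increase:
        return "stop"
    return "inconclusive"
```

For example, with a baseline of 10 minutes per ticket, a 20% success threshold, and a 5% stop threshold, a pilot result of 7.5 minutes counts as success and 11 minutes triggers a stop. Anything in between is treated as inconclusive rather than forced into a yes or no.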

2) Build guardrails early (especially with AI and automation)

- Decide what data the tool can access, and what must stay off-limits.

- Require logging and audit trails for sensitive workflows.

- Document who can change prompts, rules, or automation logic.
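The three guardrails above can be sketched in a few lines. This is an illustrative example only: the source names, role names, and in-memory log are all hypothetical, and a real deployment would rely on the access controls and logging of your identity and platform tooling.

```python
import json
from datetime import datetime, timezone

# Hypothetical names for illustration; anything not listed is off-limits.
ALLOWED_SOURCES = {"public_kb", "product_docs"}
LOGIC_EDITORS = {"ops_lead", "security_owner"}

# In-memory audit trail; a real system would write to durable storage.
audit_log: list[str] = []


def _record(actor: str, action: str, target: str, allowed: bool) -> None:
    """Append one JSON audit entry for every access decision."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "target": target,
        "allowed": allowed,
    }
    audit_log.append(json.dumps(entry))


def can_read(actor: str, source: str) -> bool:
    """Default-deny: only explicitly allowed data sources are readable."""
    allowed = source in ALLOWED_SOURCES
    _record(actor, "read", source, allowed)
    return allowed


def can_change_logic(actor: str) -> bool:
    """Only documented editors may change prompts, rules, or automation logic."""
    allowed = actor in LOGIC_EDITORS
    _record(actor, "change_logic", "automation_rules", allowed)
    return allowed
```

The design choice worth noting is default-deny: a data source that nobody thought to classify is refused and logged, instead of being silently readable.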

3) Plan for the unglamorous work: integration, training, support

- Integration: prioritize the 2–3 systems that matter most, and ignore “nice-to-have” connectors at first.

- Training: write a one-page “how we use it here” guide; people adopt faster with local norms.

- Support: create a simple intake channel for issues and feature requests; even a shared form helps.

4) Scale only when the operating model is stable

- Confirm cost behavior under load; some tools price per use and surprise you later.

- Lock in roles: admin, security owner, data owner, and business owner.

- Set a review cadence: decide what gets checked monthly vs quarterly.

According to the U.S. Small Business Administration (SBA), strong planning and cash-flow awareness are central to sustainable operations, and that mindset translates directly to tech rollouts: know your ongoing costs, not just the pilot price.
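To see why the pilot price can mislead, it helps to project a per-use pricing model from pilot size to rollout size. The sketch below assumes a flat monthly fee plus a per-use charge; the pricing structure and all figures are made up for illustration and do not describe any specific vendor. Amounts are kept in whole cents to avoid floating-point rounding.

```python
def monthly_cost_cents(base_fee_cents: int, price_per_use_cents: int,
                       uses_per_user: int, users: int) -> int:
    """Projected monthly cost under an assumed flat-fee-plus-usage model."""
    return base_fee_cents + price_per_use_cents * uses_per_user * users


# Illustrative numbers: $200 base fee, $0.05 per use, 400 uses per user.
pilot = monthly_cost_cents(20_000, 5, 400, users=10)
rollout = monthly_cost_cents(20_000, 5, 400, users=500)
print(f"pilot: ${pilot / 100:,.2f}  rollout: ${rollout / 100:,.2f}")
```

With these made-up figures, the 10-user pilot costs $400 a month while the 500-user rollout costs $10,200. The base fee barely matters at scale, so the per-use rate and realistic usage per person are the numbers to pin down before scaling.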

Comparison table: picking the right “modern tech advancement” for your situation

This table is intentionally practical. It focuses on fit, not hype, because the right choice depends on your constraints.

| Need | What to consider | Good fit examples | Watch-outs |
|---|---|---|---|
| Reduce manual busywork | Process clarity, exception handling, audit logs | Workflow automation, AI triage | Automating a broken process just scales errors |
| Faster customer response | Knowledge base quality, escalation rules | Chatbots with human handoff, agent assist | Hallucinations or wrong answers can hurt trust |
| Better security posture | Identity management, asset inventory | Zero trust, endpoint management | Tool sprawl without governance adds risk |
| Real-time operations visibility | Sensor coverage, connectivity, data pipeline | IoT monitoring, edge analytics | Data volume and maintenance can balloon |
| Modernize legacy systems | Migration strategy, downtime tolerance | Cloud platforms, managed databases | Lift-and-shift without refactoring may disappoint |

Common mistakes that make innovative tech solutions backfire

A lot of “new technology didn’t work” stories are really “we skipped the boring parts” stories. These are the patterns that show up again and again.

- Buying before defining: a tool becomes the strategy, instead of serving one.

- No owner after launch: pilots have champions, rollouts need operators.

- Ignoring change management: if workflows change, you need training and expectations, not just access.

- Underestimating security reviews: vendor risk, data handling, and identity controls can slow timelines.

- Chasing disruptive innovation everywhere: sometimes the best ROI comes from boring upgrades like monitoring, backups, and patching.

If health, safety, or critical infrastructure is involved, be conservative with automation and recommendations, and consider getting guidance from qualified professionals because risk tolerance varies by setting and regulation.

Key takeaways and next steps

New technology can be a growth lever or an expensive distraction. The difference usually comes down to fit, readiness, and disciplined rollout. If you want a clean next step, pick one workflow, set one metric, run a time-boxed pilot, then decide whether to scale or stop.

Action ideas you can do this week:

- Write a one-sentence problem statement that does not mention any product name.

- Choose one measurable outcome, and baseline it before you test anything.

- List the systems and data the tool must touch, then loop in IT/security early.

FAQ

- What is considered new technology?

Usually it means a tool or approach that is newly created or newly applied at scale. The practical test is whether it introduces a meaningful capability or improvement in real workflows.

- How do I tell hype from real cutting-edge innovations?

If the vendor cannot define measurable outcomes, integration requirements, and ongoing operating effort, treat it as hype until proven otherwise. Credible tools are specific about limits and tradeoffs.

- Are emerging tech trends the same as new technology?

Not exactly. Trends describe what’s gaining attention, while new technology is the actual capability. Some trends fade before they deliver durable value.

- What are examples of next-gen solutions for small businesses?

Many small teams start with cloud-based collaboration, basic automation, modern endpoint security, and AI assistance for drafting and support. These often require less custom infrastructure than robotics or IoT.

- How should technology adoption in the US handle privacy?

Map what data is collected, where it flows, who can access it, and how long it’s retained, then align with your industry obligations and vendor terms. When stakes are high, consult legal or compliance experts.

- What is disruptive innovation in plain English?

It’s a change that reshapes a market by making something cheaper, easier, or more accessible. In day-to-day work, though, not every “disruptive” label translates into immediate ROI for your organization.

- How long should a pilot run before scaling?

Long enough to capture real usage and edge cases, but short enough to avoid drift. Many teams use a few weeks to a couple of months, depending on complexity, security review timing, and data availability.

If you’re evaluating a future-ready technology and want a second set of eyes, a lightweight assessment can help you clarify the use case, success metrics, and rollout risks before you commit budget or ask your team to change how they work.